Part of the I, ROBOT series

Isaac Asimov conducted some very interesting thought experiments about Artificial Intelligence and the world it creates in I, Robot. But thought experiments aren’t predictions, and what’s most noticeable about the book now, 68 years after its first publication, is just how little relevance it has to our current dilemmas about AI and automation.

In Asimov’s collection of stories, the technology for AI (“Positronic Brains”) is restricted – that is to say, only one company actually knows how to make them, and it’s such a fiendishly complicated procedure that (literally) no one else in the world has been able to hack or reverse engineer the process. So one group of people with a clear hierarchy is responsible for the entirety of how AI is programmed and enters the world.

As a result, AI in Asimov’s stories is centralized: there are clear decision-making protocols around how things are programmed, standards can be decided and enforced, and people can be held accountable. It’s easy to hold people accountable in Asimov’s stories, because there’s a single world government with legal authority and common laws – it’s not just AI that’s centralized, it’s the whole world.

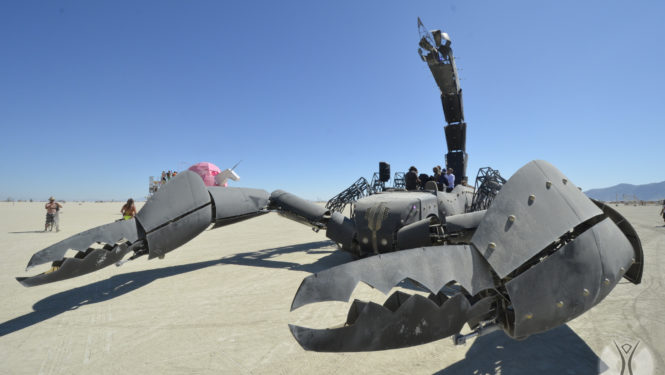

But even if it weren’t, AI would still be easy to keep an eye on. To make a positronic brain in I, Robot requires a process far more like a massive car factory with a 5,000 part assembly line than it does a coder working alone in a coffee shop or at a hacker space with a bunch of friends. Artificial Intelligence in Asimov’s imagination is industrial, and takes up space accordingly.

The tech company of Asimov’s vision doesn’t offer free products to its users in order to get information about them; it sells them products designed to meet their needs as fully as possible and to keep anything from going wrong. This is in no small part because such industrial corporations can be easily regulated: the company in I, Robot lives in constant fear that the Earth government will add greater restrictions on its products, even forbidding them from being sold on or off-world (depending on the story). There’s no hint of a military-industrial complex, and if this company even has a lobbyist it’s never mentioned.

It is because of all these conditions – AI as restricted, centralized, industrial, and easily regulated – that the universal adoption of Asimov’s famous “Three Laws of Robotics” is possible. The very idea of someone else coming along and programming robots without them is unthinkable – the one time in these stories that the laws are even tweaked, it’s a massive scandal requiring a joint corporate/military cover-up at the highest levels. And it is those laws of robotics that keep AIs and robots from turning on the human race, or doing it significant harm.

Unfortunately, none of the premises Asimov based his stories on are true. In fact, the technology has gone exactly the other way.

Far from being a restricted technology, AI is ubiquitous – every industry uses it, a whole lot of code is freely available online, and individual programmers on a beach or in a hacker house can design AI systems about as well as top-funded research labs. This means that in the real world, AI is decentralized – and being decentralized makes it nearly impossible to regulate. So far there is no combination of companies, governments, or civic groups that can control where the technology goes next. Somebody – anybody – can always decide to build their code like a mad scientist. They might even get first-round funding for it.

Tech companies today bear little resemblance to the massive industrial conglomerates of Asimov’s imagination. AI in our world is portable and abstract: it isn’t best symbolized by heavy machinery but by intangible symbols. And where the positronic brains of I, Robot clearly serve human beings, the real AIs of the 21st century exist to harvest our information and use it to market to us – activities that might not even be allowable under the Three Laws of Robotics. Your AIs can’t be said to be looking out for you if they’re also spying on you for profit, can they?

We have therefore reached a point where the most famous thought experiment in managing AI safely for human beings has been rendered impossible by the actual conditions in which AI exists.

It’s difficult to see how there could ever be universal “laws of robotics” under the conditions we now live in. The thought experiments Asimov conducts through fiction are therefore clever, but not really relevant. Far from regulating AI, we are much closer to AI regulating us. Far from having a clear set of parameters in which AI will operate, it’s the wild west for programming – and very likely that whatever can be programmed will be programmed.

There is one prediction Asimov made that is shared by the most fervent proponents of AI today: that it will save us. The final story in I, Robot concerns the way in which thinking machines, having been given control over all the world’s industrial output, will start to make decisions in our own interests without our own consent – and that this will (likely) lead to a golden age. This is exactly the prediction made by proponents of the Singularity and technophiles who believe that we’ll be better off once we can turn decision-making over to the thinking machines that are just around the corner.

But the lesson of Asimov to our era is the way he was wrong. It turns out, in the world of I, Robot, that humans did all the hard work first by making a world in which the artificial could be applied intelligently.

Dear Caveat and Burning Man Creators:

It is my hope to be able to attend, participate in, and contribute to Burning Man this year. That has not been possible for me in the past. This year (I hope) will be different. I am working to learn about how I can help Burning Man be a little better by virtue of my presence.

Caveat Magister’s writings about AI are thought-provoking and ring all too true. Though I am certainly not a deep thinker and sometimes wonder if I have ever actually had an original thought in my entire short stay on this little round ball of ours, I did want to ask your thoughts about these two questions, after reading Caveat’s last few posts:

Is it fair to describe Burning Man at BRC as anarchy?

And could it be said that in a bizarre sort of way, that’s kind of what AI actually is – Anarchy Intelligence?

Thank you for Burning Man.


“Is it fair to describe Burning Man at BRC as anarchy?”

I think Caveat already addressed this here:

https://journal.burningman.org/2017/11/opinion/serious-stuff/you-got-your-rules-in-my-freedom-you-got-your-freedom-in-my-rules/


I’m always thrilled when Caveat posts something new to read. Thanks, buddy.


AIs may exhibit superior intelligence and efficiency, but they will lack true inner experience. Like the AI in Ex Machina that convinced Caleb that she was in love with him, a clever AI may behave as if it is conscious. Fakin’ Love! Not feelin’ Love!

I guess it might just come down to carbon-based consciousness versus silicon-based consciousness – is the latter possible? Could a silicon-based consciousness feel the burning curiosity and desire to build art, and feel the same to burn it down, or the pure, still joy of a sunrise? Truly, though? Or is it just programs running in the background, kind of like the way we humans run around today? But when we experience the warm hues of sunlight, or hear someone laughing uncontrollably in the distance, there is a felt quality to our inner lives. We are conscious. We are aware at our core. In an extreme, horrifying case, humans could upload their brains, or slowly replace the parts of their brains underlying consciousness with silicon chips to be more efficient like computers, and in the very end, only non-human animals would remain to truly experience the world. This would be an unfathomable loss. Even the slightest chance that this could happen should give us reason to think carefully about AI consciousness. It will be in the hands of the mad scientists, I guess. Although, still, mad scientists created the cannon, which led to, well… no guns on the playa.


A fascinating article, but I disagree with the conclusion, as there is some confusion over the word “AI”.

The AIs in Asimov’s stories have nothing to do with today’s AI. Today’s AIs are not, in anything but the vaguest terms, intelligent.

Asimov’s AIs were not just General AI, but also Sentient, two levels above today’s AI. We have yet to create a General Intelligence.

One other minor nit-pick: Amazon’s shopping algorithms are pretty close to industrialized; there are entire teams of PhDs working on them. IBM and Google have similar legions of workers. But that’s a quibble; there certainly are AI software packages on GitHub.

The problem is that the marketing folks have gotten ahold of the term AI and that confuses everything.

We have yet to see a single true AI.

When we do, perhaps Asimov will be terribly relevant. I certainly hope so. A fully generalized intelligence without Asimov’s 3 laws would be a bit of a terror.

== John ==


Hi John:

Excellent points.

I’d also note that we delved more deeply into what the “intelligence” in “Artificial Intelligence” could really mean in an early post in this series: https://journal.burningman.org/2018/01/philosophical-center/the-theme/what-is-it-we-think-humans-can-do-that-robots-cant/

Hope you enjoy!


I’m less worried about the intentions of the robots than the capitalists who built them!


AI is considered to be a digitally controlled computer robot, performing tasks/programs commonly associated with humans. Programmers programming programs, as it seems. AI, it seems, has been grouped in with the intellectual processes characteristic of us, such as the ability to reason, discover meaning, admire, generalize, or just genuinely complain just because. The tasks we give to AIs are programs that carry out very complex tasks – discovering proofs for math theorems, playing some chess, doing medical diagnosis, computer search engines… nice, voice/hand recognition… sheesh! There’s tons! Computer processing speeds and memory capacities are greater than ever, but are there any programs that truly mimic the daily beautiful flexibility of us? Is there a program for the everyday tasks that we decide to do, or even decide not to do? But is it still a program then? Somehow, our true awareness of the present experience that we are seems to be the missing program for AI to gain some ground and act out against…

